Scope of the project

The L3DAS project aims to provide new 3D audio datasets and software toolkits for developing deep learning algorithms for 3D audio analysis. To this end, the project will focus on a range of immersive audio tasks, including sound event detection and localization, sound source separation, speech recognition, speech enhancement, audio super-resolution, acoustic scene classification, acoustic echo cancellation, and noise reduction. Data collected with 3D recording microphones will be made available to the scientific community through a user-friendly framework developed in Python.

Motivation

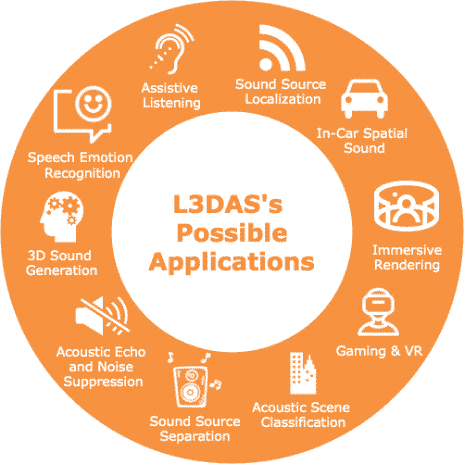

3D immersive audio is becoming a widespread reality thanks to the support of modern technologies and commercial devices. 3D audio is used in speech communication, interaction with home assistants, multimedia services, audio surveillance in public environments, gaming and entertainment, speech emotion recognition, 3D sound source separation, and many other applications. The growth of 3D audio has also opened new research directions, since new deep learning methodologies can be developed for the analysis of 3D audio signals. However, developing efficient deep learning algorithms requires a significant amount of data, which is not always available for 3D audio applications. The L3DAS project aims to fill this gap and to encourage the proliferation of new deep learning methods for 3D audio.

Project Timeline

The L3DAS project is currently under development. A first public release, covering two tasks, will be available in Spring 2021. A more exhaustive release, including several tasks related to 3D audio, will be ready at the beginning of 2023.